Before you deploy AI to handle claims, deploy it to review them

The case for making claims audit your AI pilot, and why it changes everything that comes after

May 11, 2026

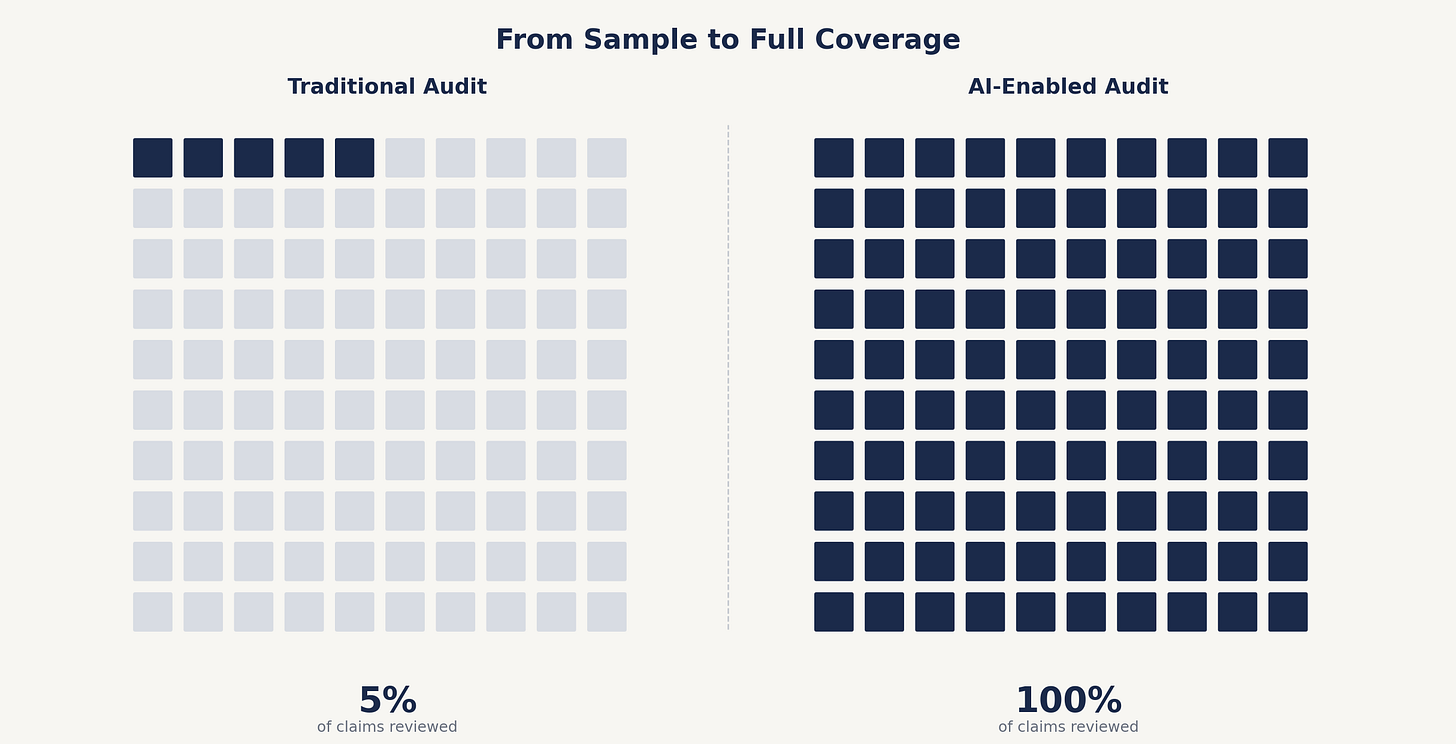

Every claims executive I’ve worked with over the last 25 years has lived with the same compromise. You audit a small sample of files because you can’t afford to read them all. You run audits quarterly because that’s the fastest a manual review cycle can responsibly turn. Findings land five or six weeks after the work was done, by which point the adjuster has handled a hundred more files and moved on. Nobody thinks this is ideal. Everyone agrees it’s the best you can do with the tools available.

Those tools haven’t fundamentally changed in thirty years.

With AI, that is no longer the case. The carriers and TPAs that figure this out early aren’t just going to modernize a function. They’re going to see things in their own operations they’ve never been able to see before.

Here’s the thesis: the most defensible place to deploy AI in a claims operation isn’t FNOL, isn’t triage, isn’t reserves. It’s audit. Not because audit is sexy. Because audit is where AI’s value compounds the fastest, the risk is lowest, and the result is visibility you can’t get any other way.

What audit has always been and why

Traditional audit is a five-step compromise. You pull a sample. You read files. You score them against criteria. You write findings. You distribute the report. By the time it lands in front of someone who could act on it, the audit period is a quarter or more in the rearview mirror.

This isn’t a methodology problem. It is a math problem. An audit team can’t read two hundred files a month by hand and do it well. So you sampled, you scored, and you accepted that audit was directionally useful and operationally incomplete.

A recent Sedgwick analysis found that nearly two-thirds of carriers see a gap between their AI vision and their AI reality, with most pointing to inconsistent or siloed data as the root cause. That’s not an AI problem. That’s the same data quality problem audit has been flagging for decades. The difference now is that you have a tool capable of doing something about it, and audit is the right place to point it.

What AI actually changes about audit

Three concrete things shift when AI shows up in the audit function.

Sample size stops being the constraint. If you can read every file at roughly the cost of reading some files, the question isn’t “what’s our sample size?” It’s “what are we looking for?” That’s a better conversation. It moves audit from statistical inference to direct observation. You’re no longer estimating what’s happening across the book. You’re seeing it.
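To put a rough number on what "statistical inference" costs, here is a minimal sketch of the margin of error on an error rate estimated from a sample. The 10% error rate and 50-file sample are made-up figures, and the formula is the standard normal approximation for a simple random sample, not a description of any particular audit methodology.

```python
import math


def sample_margin_of_error(error_rate, sample_size, z=1.96):
    """Approximate margin of error for an error rate estimated from a
    simple random sample (normal approximation; z=1.96 is roughly 95%)."""
    return z * math.sqrt(error_rate * (1 - error_rate) / sample_size)


# Hypothetical figures: 5 of 50 sampled files had a problem.
moe = sample_margin_of_error(error_rate=0.10, sample_size=50)
print(f"Sampled error rate: 10% +/- {moe:.1%}")
```

Under those assumptions the true rate could plausibly sit anywhere from about 2% to about 18%. Reading every file removes that sampling uncertainty, though not the uncertainty in how each file is scored.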

Findings arrive in days, not weeks. The five-to-six-week reporting lag is the single biggest reason audit findings rarely change behavior. When a finding finally hits the desk of a claims manager, the file in question is closed and the adjuster has moved on. Continuous review changes the feedback loop entirely. An issue identified Tuesday can be addressed Wednesday. That’s the kind of change that moves loss costs.

The audit can ask new questions. Sampling forced audit to ask broad questions across small populations. AI lets you ask narrow questions across everything. Are reserves being set consistently across adjusters in the same line of business? Are settlement authorities being respected on cases above a certain threshold? Are diary entries being made on the dates they claim, or are they being backfilled? These are questions that quarterly sampling couldn’t reliably answer. They’re answerable now.
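Two of those narrow questions can be sketched as simple checks run over every file rather than a sample. Everything here is hypothetical: the record fields, the two-day backfill tolerance, and the sample data are assumptions for illustration, not the schema of any real claims system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DiaryEntry:
    claim_id: str
    adjuster: str
    claimed_date: date   # date the entry says the work was done
    recorded_date: date  # date the system actually saved the entry


@dataclass
class Settlement:
    claim_id: str
    adjuster: str
    amount: float
    authority_limit: float     # adjuster's settlement authority
    supervisor_approved: bool


def backfilled_entries(entries, max_lag_days=2):
    """Entries saved more than max_lag_days after the date they claim."""
    return [e for e in entries
            if (e.recorded_date - e.claimed_date).days > max_lag_days]


def authority_exceptions(settlements):
    """Settlements above the adjuster's authority with no supervisor approval."""
    return [s for s in settlements
            if s.amount > s.authority_limit and not s.supervisor_approved]


# Made-up records for illustration only.
entries = [
    DiaryEntry("C-101", "adjuster_a", date(2026, 3, 2), date(2026, 3, 2)),
    DiaryEntry("C-102", "adjuster_b", date(2026, 3, 2), date(2026, 3, 20)),
]
settlements = [
    Settlement("C-101", "adjuster_a", 12_000.0, 25_000.0, False),
    Settlement("C-103", "adjuster_b", 40_000.0, 25_000.0, False),
    Settlement("C-104", "adjuster_b", 60_000.0, 25_000.0, True),
]

late = backfilled_entries(entries)
over = authority_exceptions(settlements)
print([e.claim_id for e in late])
print([s.claim_id for s in over])
```

Checks like these are plain rules, not AI. In this sketch, the AI's job would be upstream: turning unstructured file notes into records clean enough for rules like these to run against.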

A note on risk. PwC published a useful piece earlier this year flagging that AI models can generate incorrect summaries, misinterpret claimant statements, and occasionally introduce data points that weren’t in the record at all. That’s a real concern, and anyone selling AI in claims who waves it off shouldn’t be trusted. The way you handle that risk is by deploying AI in the function specifically designed to catch errors.

Audit doesn’t pay claims. It doesn’t set reserves. It doesn’t write coverage opinions. It reviews them, and AI is very good at helping with that review. Using AI to support a review function, with human auditors validating findings before they’re acted on, is the lowest-stakes way to introduce AI into a claims operation while building the organizational muscle to evaluate it.

What this looks like in practice

A few principles for how to actually do this, without pretending it’s a one-quarter project.

Start narrow. Pick one line of business and one set of audit criteria. High-volume lines like workers’ comp or auto property tend to be the best starting point because that’s where the gap between what auditors see and what’s actually happening is widest. Run AI-enabled review in parallel with your existing sampling approach for a quarter or two. Compare findings. Adjust criteria. Build confidence before you scale.

Define what good looks like before you deploy. This is where most AI projects in claims quietly fail. AI can enhance audit quality when the deployment defines the context and when audit criteria are documented, tested against historical findings, and validated before AI runs against live files. That work isn’t optional. It’s the work.
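One way to make "tested against historical findings" concrete is to rerun the criteria on files your auditors have already reviewed and measure agreement. The sketch below assumes each side produces a set of flagged claim IDs. The function name and the IDs are hypothetical.

```python
def score_against_history(ai_flags, auditor_flags):
    """Compare AI-flagged claim IDs with those human auditors flagged
    on the same historical files."""
    ai, human = set(ai_flags), set(auditor_flags)
    agreed = ai & human
    return {
        # Share of AI flags the auditors also raised.
        "precision": len(agreed) / len(ai) if ai else 0.0,
        # Share of auditor flags the AI also raised.
        "recall": len(agreed) / len(human) if human else 0.0,
        "ai_only": sorted(ai - human),   # possible false alarms, or real misses by humans
        "missed": sorted(human - ai),    # issues the criteria failed to catch
    }


# Made-up claim IDs for illustration only.
result = score_against_history(
    ai_flags=["C-101", "C-102", "C-105"],
    auditor_flags=["C-101", "C-105", "C-107", "C-109"],
)
print(result)
```

The two disagreement lists matter more than the scores. Each entry is a file for an auditor to reread, and each reread either tightens a criterion or confirms the AI caught something the original review did not.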

Treat AI-generated findings as drafts, not verdicts. The auditor’s role doesn’t disappear in this model. It elevates. With an AI tool, auditors stop being readers and start being reviewers. They spend their time on the small percentage of findings that require real judgment instead of the much larger percentage of files that confirm the operation is running correctly.
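A minimal sketch of that routing, assuming the AI tool reports a pass/fail draft and a confidence score for each criterion. The `Finding` fields and the 0.9 threshold are hypothetical and would need tuning against your own validated results.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    claim_id: str
    criterion: str
    passed: bool       # the AI's draft conclusion for this criterion
    confidence: float  # hypothetical 0-1 score reported by the AI tool


def route_findings(findings, min_confidence=0.9):
    """Split draft findings into those an auditor must review and those
    that confidently confirm the file met the criterion."""
    needs_review, confirmed_ok = [], []
    for f in findings:
        if f.passed and f.confidence >= min_confidence:
            confirmed_ok.append(f)
        else:
            # Every draft failure and every low-confidence pass goes to a human.
            needs_review.append(f)
    return needs_review, confirmed_ok


# Made-up findings for illustration only.
drafts = [
    Finding("C-101", "reserve set within 14 days", True, 0.97),
    Finding("C-102", "reserve set within 14 days", False, 0.95),
    Finding("C-103", "coverage verified before payment", True, 0.62),
]

needs_review, confirmed_ok = route_findings(drafts)
print([f.claim_id for f in needs_review])
print([f.claim_id for f in confirmed_ok])
```

Note that no draft failure is ever auto-accepted, however confident the tool is. A periodic spot check of the confirmed pile is a reasonable addition, since a confident pass can still be wrong.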

What’s actually on the table

The carriers and TPAs that move on this first aren’t going to win because they’re faster auditors. They’re going to win because they finally have visibility into their own operations that the industry has been promising for thirty years and not delivering.

That’s the unlock. Not faster audits. Better visibility into how the business actually runs.

This is the work the Claims Audit Portal was built for: AI-aware audit criteria and findings delivered in days instead of weeks. But the product is downstream of the bet. The bet is that audit is the right place for most claims operations to start with AI. Not because AI is risky everywhere else, but because audit is where AI’s value compounds the fastest and where the organizational learning is most transferable to everything that comes next.

The first AI project that earns its keep is the one that proves the rest are working.